2024

Lens + Feel: Reimagining Image Recognition as Emotional Support

A concept that reimagines Google Lens as an emotionally intelligent cultural companion, helping newcomers navigate unfamiliar social moments.

Context

For newcomers, everyday life in a foreign culture carries an invisible strain. Simple interactions like ordering food, greeting someone, or using public transport are layered with uncertainty.

Without cultural fluency, participation becomes cautious. Each moment involves interpretation: reading gestures, tone, proximity, and invisible social rules. Translation alone cannot resolve this emotional layer.

Problem

How might we design tools that address the emotional layer of cultural transition for newcomers in foreign countries, so that everyday interactions feel less stressful and more participatory?

Goals

The project aims to:

Help newcomers feel quietly confident, even in unfamiliar social moments.

Ease the constant hum of self-doubt and fear of judgment.

Make the possibility of mistakes feel safe and manageable.

Transform small, everyday interactions from tense moments into gentle victories.

Foster gradual cultural adaptation by helping users navigate and integrate into the local culture.

Process

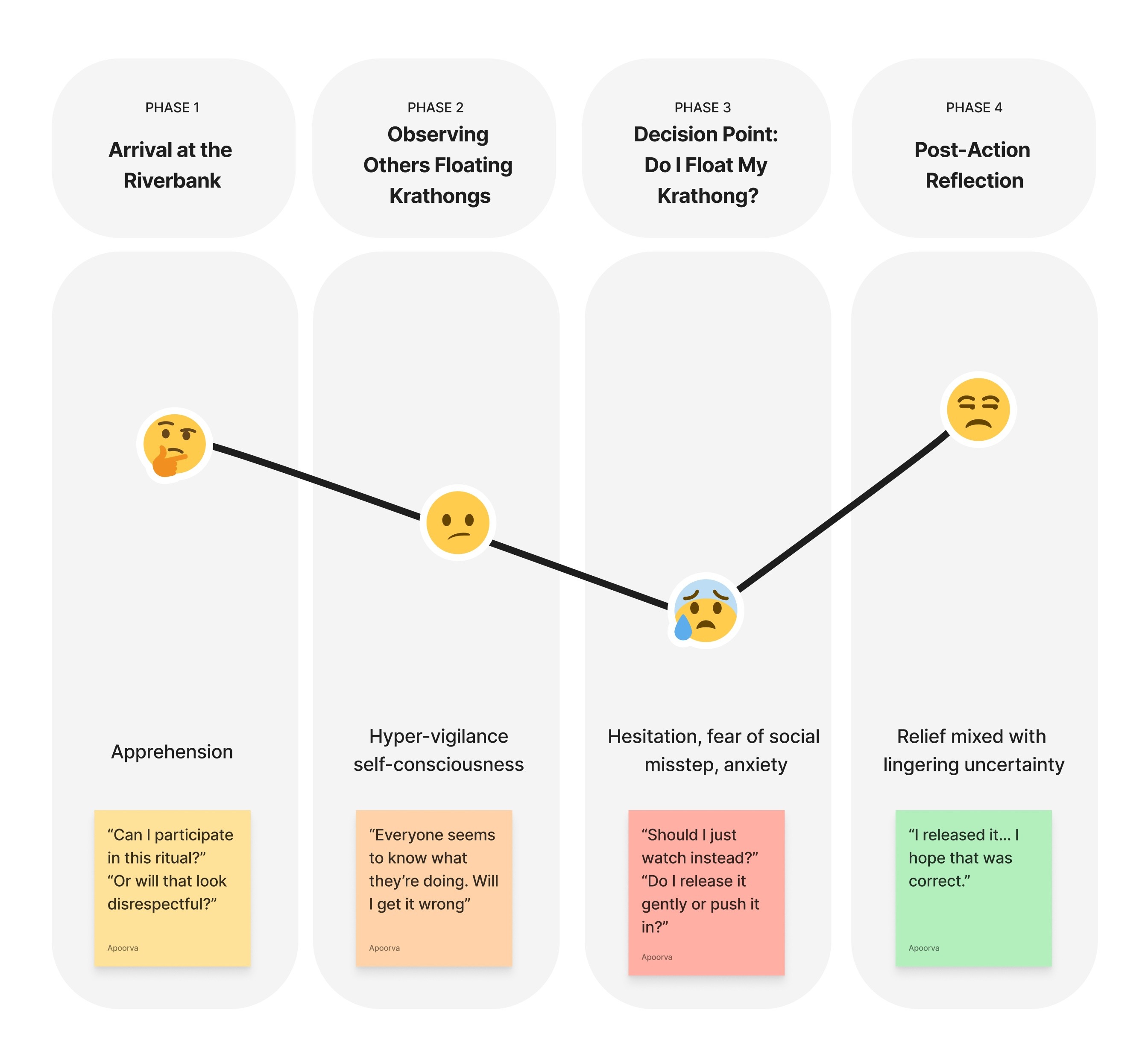

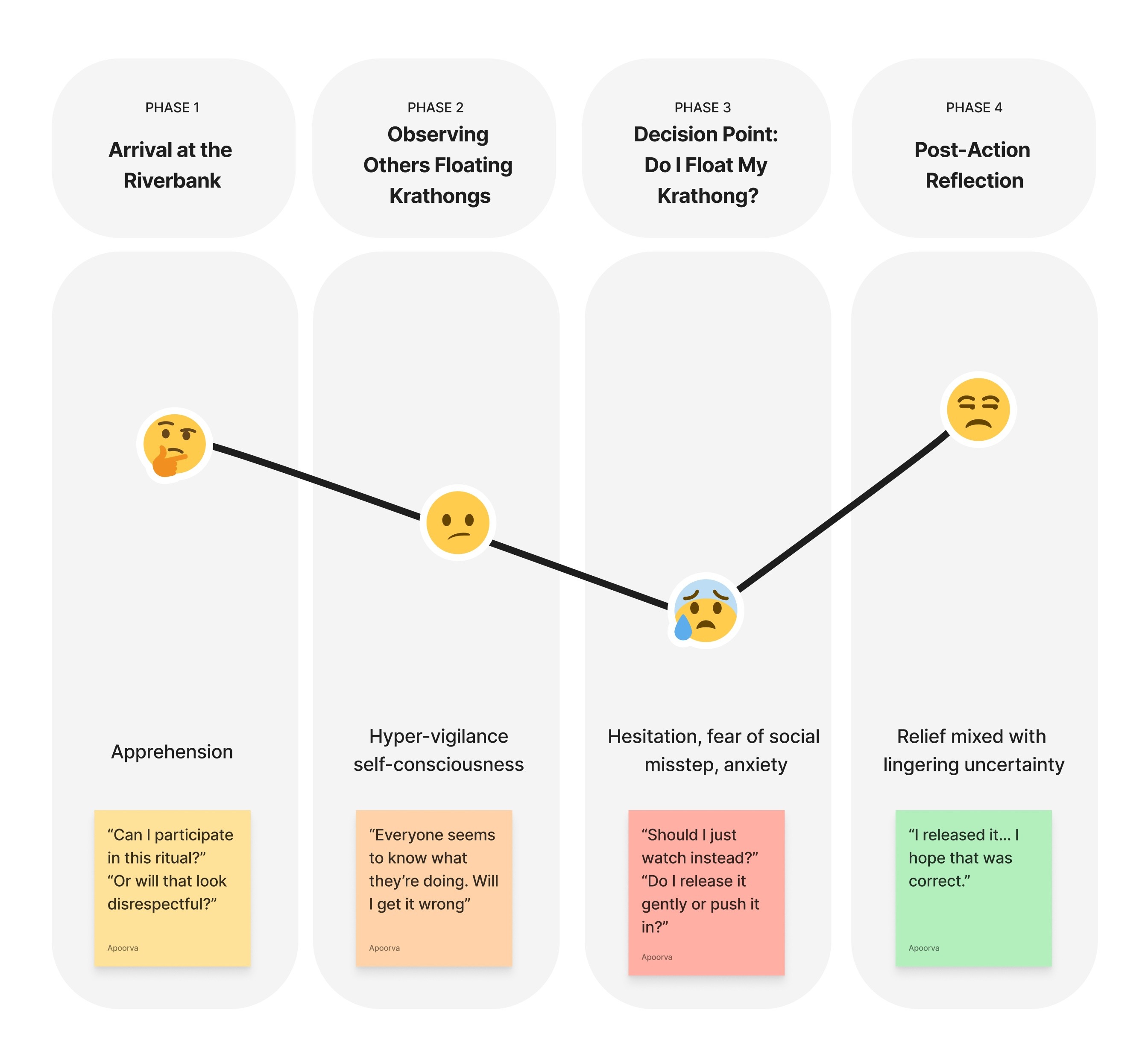

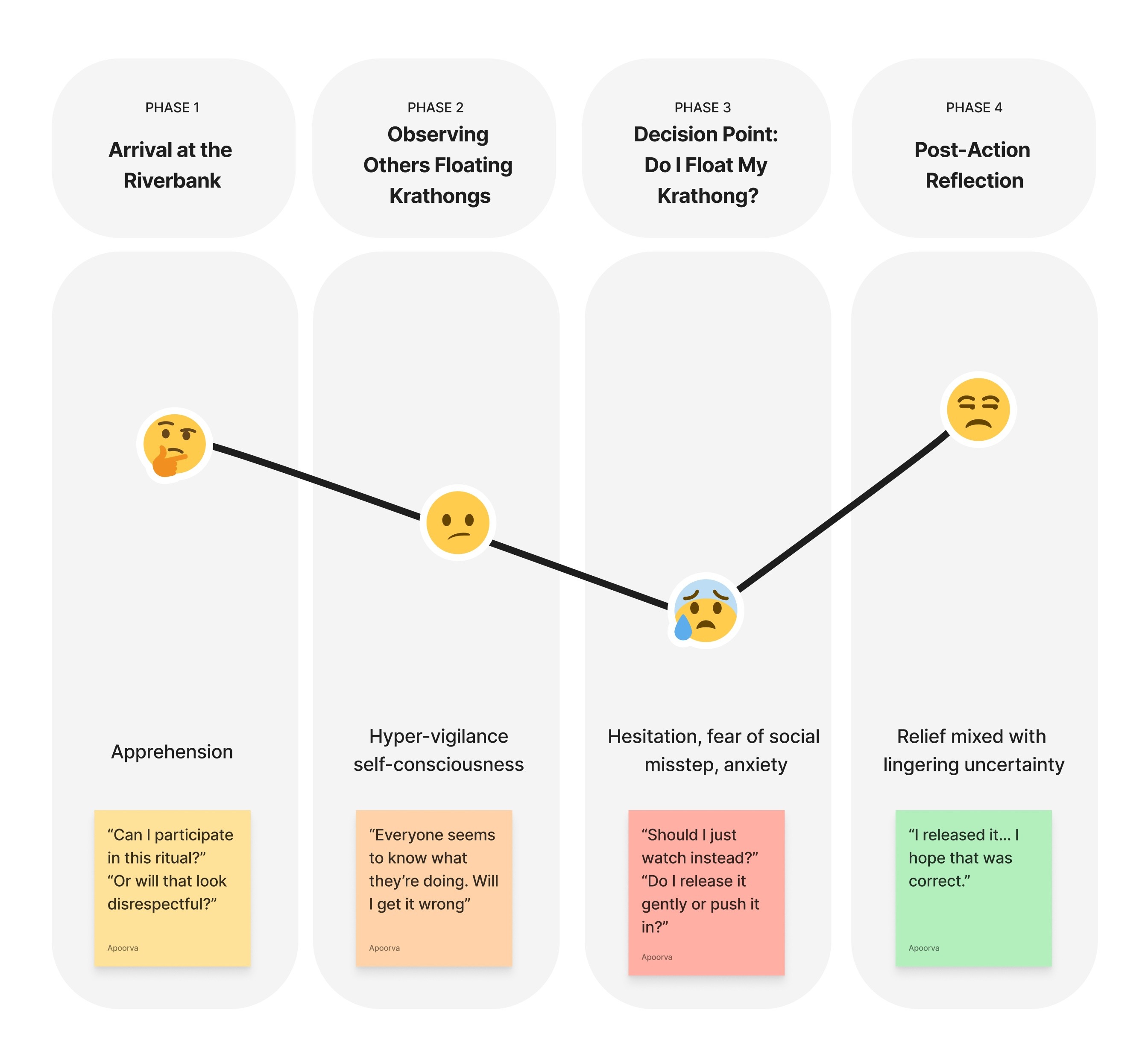

To understand the emotional impact of cultural uncertainty, I started by selecting a scenario that captures hesitation and fear of judgment: a newcomer floating their first krathong during the Loy Krathong festival (an annual Thai festival where people release small decorated baskets, called krathongs, onto rivers and waterways to pay respect, make wishes, and symbolically let go of misfortune).

Emotional Journey Mapping

Reframing Negative Moments as Opportunities

The central insight of this project is that uncomfortable moments are not problems to eliminate; they are signals of unmet emotional needs.

Tools like image search or translation perform their functional task well: they identify, translate, and summarize.

But the tension remains: these tools convey what something is, not how to be in that situation.

Each negative emotion reveals something specific:

Hesitation → Need for contextual guidance.

Self-consciousness → Need for reassurance.

Fear of judgment → Need for normalization.

Post-interaction doubt → Need for reflective affirmation.

I aimed to reframe these moments as design opportunities.
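In a prototype, the emotion-to-need mapping above could be held in a small lookup table. This is only an illustrative sketch: the `Emotion` names and the `need_for` helper are hypothetical and are not taken from the actual prototype.

```python
from enum import Enum


class Emotion(Enum):
    """Negative emotions identified in the journey (names are illustrative)."""
    HESITATION = "hesitation"
    SELF_CONSCIOUSNESS = "self-consciousness"
    FEAR_OF_JUDGMENT = "fear of judgment"
    POST_INTERACTION_DOUBT = "post-interaction doubt"


# Each emotion points to the unmet need it signals, as listed above.
EMOTION_TO_NEED = {
    Emotion.HESITATION: "contextual guidance",
    Emotion.SELF_CONSCIOUSNESS: "reassurance",
    Emotion.FEAR_OF_JUDGMENT: "normalization",
    Emotion.POST_INTERACTION_DOUBT: "reflective affirmation",
}


def need_for(emotion: Emotion) -> str:
    """Return the design need that a given emotion signals."""
    return EMOTION_TO_NEED[emotion]
```

Keeping the mapping in one place would let each screen of the experience ask "which need am I answering here?" rather than hard-coding reassurance copy per screen.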

Prototyping — Google Lens: Feel Companion Mode

Using iterative prompting, I vibe-coded an early prototype of “Lens + Feel.”

This prototype reimagines Google Lens as an emotionally intelligent companion rather than just an object-recognition or translation tool.

Designed as a mobile-first experience, it supports people experiencing social anxiety while navigating unfamiliar cultural environments. Instead of simply identifying objects or translating text, the system responds to both the cultural situation and the user’s emotional state.

Initial Version: Claude

Idea Generation & Prototyping

Later Version: Figma Make

Images and UI Polish

Final Prototype

How the Experience Works

When a user points their camera at a culturally significant object or situation, the interface:

Identifies and translates it, showing local script, transliteration, and English meaning.

Provides contextual cultural explanation.

Prompts the user to select how they’re feeling through emotion-based “feel chips,” or lets them express it verbally through the voice assistant.

Delivers personalized, empathetic guidance based on that emotional input.
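The four steps above can be sketched as a simple data flow. This is a minimal illustration, not the prototype's code: the `Recognition` type, the `build_response` function, the chip labels, and the guidance copy are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Recognition:
    """What the camera step returns for a recognized object or situation."""
    local_script: str      # name in the local script
    transliteration: str   # romanized form
    english_meaning: str   # short English gloss
    cultural_context: str  # brief explanation of the practice


# Hypothetical feel chips with placeholder guidance copy (not from the prototype).
GUIDANCE_BY_FEELING = {
    "nervous": "It is fine to watch others first and join in when you feel ready.",
    "unsure": "Start with one small step; nobody expects a perfect first try.",
    "curious": "Ask someone nearby what this moment means to them.",
}

# Fallback used when the feeling does not match any chip.
DEFAULT_GUIDANCE = "Take your time. You are welcome here."


def build_response(recognition: Recognition, feeling: str) -> dict:
    """Combine the recognition result with guidance chosen by the user's feeling."""
    return {
        "title": f"{recognition.local_script} ({recognition.transliteration})",
        "meaning": recognition.english_meaning,
        "context": recognition.cultural_context,
        "guidance": GUIDANCE_BY_FEELING.get(feeling, DEFAULT_GUIDANCE),
    }


# Example based on the Loy Krathong scenario described earlier.
krathong = Recognition(
    local_script="กระทง",
    transliteration="krathong",
    english_meaning="small decorated basket",
    cultural_context="Released onto rivers and waterways during Loy Krathong.",
)
response = build_response(krathong, "nervous")
```

The point of the structure is that the emotional input changes only the guidance field; identification and cultural context stay the same regardless of how the user feels.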

The interaction is designed to feel calm and supportive, less like a search engine and more like a trusted local companion who empathizes with you.

Users can ask follow-up questions using a built-in voice assistant, reducing friction during anxious moments. Responses can also be read aloud through text-to-speech, offering reassurance through tone as well as text.

If the moment feels meaningful, users can save it, capturing the scenario and their emotional state. These saved encounters appear in a Moments Log, where users can revisit experiences, continue the conversation about them if needed, reflect on how they handled them, and recognize their growing cultural confidence over time.
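A Moments Log like the one described could be modeled with a small record type and an in-memory list. The sketch below is an assumption about one possible shape, not the prototype's implementation; `Moment`, `MomentsLog`, and their methods are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class Moment:
    """One saved encounter: what happened and how the user felt."""
    scenario: str
    feeling: str
    saved_at: datetime
    reflections: List[str] = field(default_factory=list)


class MomentsLog:
    """In-memory log of saved moments (a real app would persist these)."""

    def __init__(self) -> None:
        self._moments: List[Moment] = []

    def save(self, scenario: str, feeling: str,
             saved_at: Optional[datetime] = None) -> Moment:
        """Store a new moment, timestamped now unless a time is given."""
        moment = Moment(scenario, feeling, saved_at or datetime.now())
        self._moments.append(moment)
        return moment

    def newest_first(self) -> List[Moment]:
        """Moments ordered for browsing, most recent at the top."""
        return sorted(self._moments, key=lambda m: m.saved_at, reverse=True)

    def feelings_over_time(self) -> List[str]:
        """Feelings in chronological order, to show how confidence shifts."""
        ordered = sorted(self._moments, key=lambda m: m.saved_at)
        return [m.feeling for m in ordered]
```

Saving the feeling alongside the scenario is what would allow the log to show change over time, rather than acting only as a photo history.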

Potential Risks

Over-reliance: Users may depend on the tool rather than gradually internalizing cultural understanding.

Oversimplification: Cultural norms are fluid and contextual; simplified guidance may create false confidence.

Tone mismatch: If the assistant feels overly scripted or patronizing, reassurance may feel artificial.

Contextual inappropriateness: Using a phone in certain cultural or religious settings may itself increase self-consciousness.

Reflection

If I were to continue this work, I would:

Conduct interviews with newcomers from diverse cultural backgrounds to validate emotional patterns.

Test whether reassurance measurably reduces hesitation in real-time scenarios.

Explore adaptive guidance that gradually reduces dependency as confidence increases.

Ultimately, this project reinforced a key insight:

Cultural transition is not only cognitive; it is also emotional.

Design that acknowledges this layer can transform small, uncertain moments into quiet steps towards belonging.
